The Similarities Between Satellite and Micrograph Imagery Analysis

From macro to micro, the world around us is filled with imagery that holds valuable information waiting to be uncovered. Two seemingly distinct fields, remote sensing and micrograph imagery analysis, have more in common than meets the eye. Both fields rely on the use of advanced technologies to analyze large sets of data and derive meaningful insights.

Remote sensing involves the collection and analysis of data from a distance, typically from satellites or aircraft. It is commonly used in environmental monitoring, agriculture, and urban planning, among other areas. Micrograph imagery analysis, on the other hand, involves the examination of images captured through microscopes to gain insights into structures and processes at the microscopic level. It is essential in disciplines such as biology, medicine, and materials science.

While these fields differ in scale, the use of deep learning has enabled similarities to emerge in their analysis techniques. Deep learning is a subset of machine learning that uses neural networks to analyze complex data. This technology has revolutionized the way we analyze imagery and has enabled us to gain insights that were previously impossible to obtain. In this article, we will explore the similarities between remote sensing imagery analysis and micrograph imagery analysis using deep learning.

The Complex Challenges of Satellite and Micrograph Imagery Analysis

Interpreting Complex Data

Both remote sensing and micrograph imagery analysis involve the interpretation of complex data sets. In remote sensing, large sets of data are collected from satellites or aircraft and must be processed to extract useful information. Take SAR (Synthetic Aperture Radar) imagery as an example: the data is hard to interpret because it spans multiple dimensions, such as time, polarization, and frequency. These parameters affect the resolution of the image, its sensitivity to different surface features, and the penetration depth. Analysis becomes even more challenging because of external factors such as atmospheric interference, signal noise, speckle, signal distortion, and scattering effects. Interpreting this data therefore requires specialized training and expertise.

Similarly, in micrograph imagery analysis, images captured through microscopes must be processed to identify structures and processes at the microscopic level. At such a small scale, even slight variations in lighting, color, and texture can significantly impact the interpretation of the image. This requires researchers to have a deep understanding of the subject matter, as well as advanced analytical tools and techniques to accurately interpret the data.

Deep learning has been instrumental in both fields in processing and interpreting large data sets. Neural networks are trained to identify patterns and features in the imagery, enabling insights to be derived that may not have been evident through traditional analysis techniques. Simply put, well-trained models can gradually crystallize the knowledge of experts and perform complex analyses at scale.

Handling Large Files

Electron micrographs offer an unparalleled level of detail owing to their remarkably high resolution, which typically exceeds 10K and even surpasses 100K in some cases. Consequently, the image files are quite large. Aerial and satellite images used in geospatial applications are likewise characterized by high resolution and large data volumes. Despite the vastly different scales of observation in electron microscopy and remote sensing, the two share the need to handle large data sets with exceptional resolution.

The most common format for storing electron micrographs is the tiled TIFF, a file layout designed for managing very large images. Satellite and aerial photographs, by contrast, are typically saved as GeoTIFF, a TIFF-based format that embeds geospatial metadata in the file and can use the same tiled layout.

Given the immense size and complexity of these high-resolution images, the human eye is ill-equipped to efficiently analyze them. The task can be likened to scouring the vast expanse of an ocean for a single object. Unfortunately, experts in the fields of natural science and geoinformatics still rely on their own labor and visual acuity to study these high-resolution images.

However, by utilizing machine learning techniques to automate the analysis of these images, practitioners can free themselves from the tedium of manual inspection. Yet, developing machine learning models to accurately analyze high-volume, high-resolution images presents a host of challenges, which require a specific set of expert skills.
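One common way to make such large images tractable for a model is to process them tile by tile. The following is a minimal sketch, assuming the image (or a region of it) is already available as a NumPy array. The function name `iter_tiles` and the `tile_size` and `overlap` values are illustrative assumptions, not something prescribed by either field; in practice, tiles would usually be read lazily from the tiled TIFF or GeoTIFF file rather than from an array held in memory.

```python
import numpy as np

def iter_tiles(image, tile_size=512, overlap=64):
    """Yield (row, col, tile) windows that together cover the whole image.

    Neighbouring tiles share `overlap` pixels, so an object lying on a tile
    border appears whole in at least one tile if it is smaller than the
    overlap. Tiles at the right and bottom edges may be smaller than
    `tile_size`.
    """
    if not 0 <= overlap < tile_size:
        raise ValueError("overlap must satisfy 0 <= overlap < tile_size")
    step = tile_size - overlap
    height, width = image.shape[:2]
    for row in range(0, max(height - overlap, 1), step):
        for col in range(0, max(width - overlap, 1), step):
            yield row, col, image[row:row + tile_size, col:col + tile_size]

# Hypothetical 1000 x 1200 single-band image standing in for a large scene.
scene = np.zeros((1000, 1200), dtype=np.uint8)
tiles = list(iter_tiles(scene))
```

Each tile can then be passed to a model independently, and the `(row, col)` offsets make it possible to map per-tile results back to coordinates in the full image.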

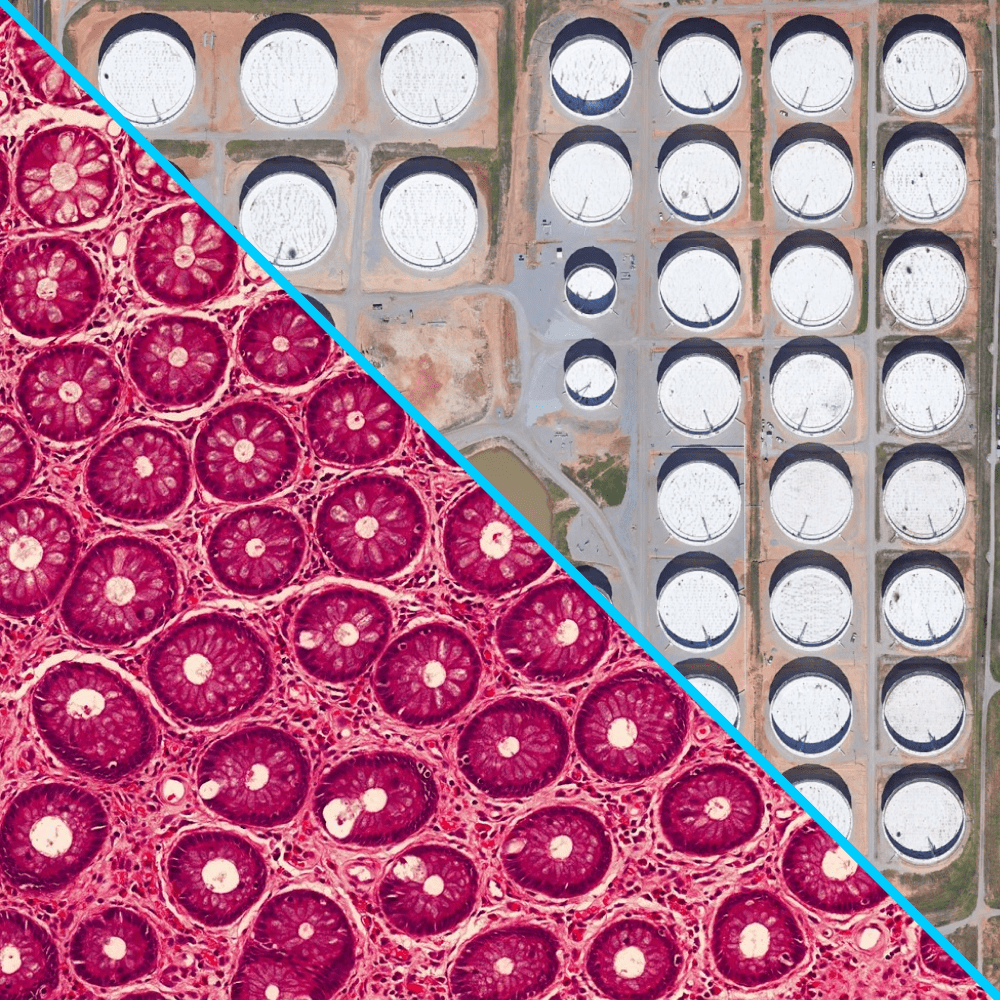

Object Detection

Object detection models are an essential tool for analyzing complex imagery in both remote sensing imagery analysis and micrograph imagery analysis. These models are used to identify and locate specific objects or regions of interest within an image, which is crucial for understanding the structure and function of different systems at both macro and micro scales.
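Detected objects are often reported as axis-aligned bounding boxes, and a widely used way to compare a predicted box with a reference box is intersection over union (IoU). The sketch below assumes boxes given as `(x_min, y_min, x_max, y_max)` tuples in pixel coordinates; the function name `box_iou` and the box convention are assumptions made for illustration, and the same measure applies whether the boxes enclose cells or buildings.

```python
def box_iou(box_a, box_b):
    """Intersection over union of two (x_min, y_min, x_max, y_max) boxes."""
    ax0, ay0, ax1, ay1 = box_a
    bx0, by0, bx1, by1 = box_b
    # Width and height of the overlapping rectangle (zero if the boxes are disjoint).
    inter_w = max(0.0, min(ax1, bx1) - max(ax0, bx0))
    inter_h = max(0.0, min(ay1, by1) - max(ay0, by0))
    inter = inter_w * inter_h
    union = (ax1 - ax0) * (ay1 - ay0) + (bx1 - bx0) * (by1 - by0) - inter
    return inter / union if union > 0 else 0.0
```

An IoU of 1 means the two boxes coincide, and 0 means they do not overlap at all.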

The main goal of object detection in remote sensing is to automatically detect and identify objects of interest within satellite or aerial images. These objects include buildings, roads, vehicles, vegetation, and other land features.

In micrograph imagery analysis, object detection models are used to identify and analyze the structures and processes of individual cells and other microscopic structures. For example, researchers may use these models to detect and analyze specific features of a cell, such as the presence of organelles or the location of different proteins within the cell.

Deep learning has enabled object detection to be performed with a high degree of accuracy in both fields. Convolutional neural networks (CNNs) have been particularly effective in identifying objects within the imagery. These networks use filters to identify features in the imagery and can be trained to identify specific objects or structures.
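To make the idea of a filter concrete, here is a minimal sketch of a single filter sliding over a tiny image, written with NumPy only. The Sobel kernel used here is hand-written for illustration; in a CNN the filter weights are learned during training. The function name `convolve2d_valid` and the toy image are assumptions of this example.

```python
import numpy as np

def convolve2d_valid(image, kernel):
    """Slide `kernel` over `image` without padding and sum the elementwise products.

    Strictly speaking this is cross-correlation, which is the operation deep
    learning libraries conventionally call convolution.
    """
    kh, kw = kernel.shape
    out_h = image.shape[0] - kh + 1
    out_w = image.shape[1] - kw + 1
    out = np.zeros((out_h, out_w), dtype=float)
    for i in range(out_h):
        for j in range(out_w):
            out[i, j] = np.sum(image[i:i + kh, j:j + kw] * kernel)
    return out

# Hand-written filter that responds to dark-to-bright transitions from left to right.
sobel_x = np.array([[-1, 0, 1],
                    [-2, 0, 2],
                    [-1, 0, 1]], dtype=float)

# Toy image: dark left half, bright right half.
image = np.zeros((5, 6))
image[:, 3:] = 1.0

response = convolve2d_valid(image, sobel_x)
# Each row of `response` is [0, 4, 4, 0]: the filter fires only around the edge.
```

The response is zero over the uniform regions and large where the window straddles the boundary, which is the sense in which a filter identifies a feature.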

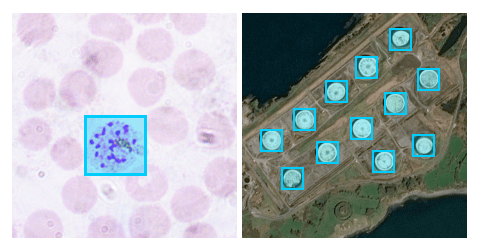

Object detection of malaria parasites in micrograph imagery on the left and of oil tanks in remote sensing imagery on the right.

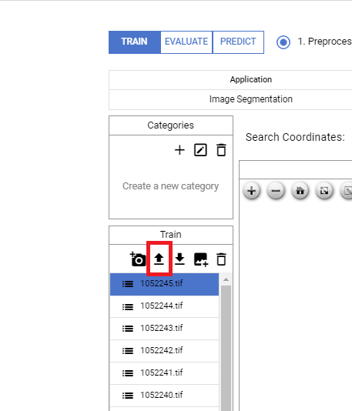

Image Segmentation

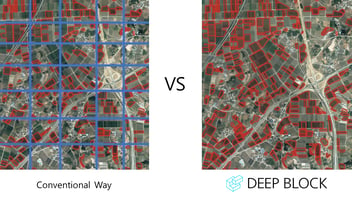

Image segmentation is the process of dividing an image into multiple segments, each containing a different object or structure. This technique is used in both remote sensing and micrograph imagery analysis to isolate specific features of interest.

In remote sensing, image segmentation can be used to identify land cover, vegetation types, and land use patterns across large geographic areas. This information can be used to monitor changes in the environment, track urbanization and deforestation, and inform resource management decisions.

In micrograph imagery analysis, image segmentation models are used to study the structures and processes of individual cells and other microscopic structures. By segmenting an image into its individual components, researchers can identify and analyze different regions of interest within the image, such as the nucleus or cytoplasm of a cell.

One key similarity between these two fields is the use of deep learning techniques to improve the accuracy and efficiency of image segmentation. Neural networks, in particular, have proven to be highly effective at learning and extracting relevant features from complex imagery, leading to more accurate and efficient segmentation results.

In both remote sensing imagery analysis and micrograph imagery analysis, contextual information such as the spatial relationships between different objects or regions within the image can be used to guide the segmentation process and ensure accurate results.
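As a rough illustration of what a segmentation result looks like in code, the sketch below assumes a model that outputs one score per class for every pixel, stored as a `(num_classes, H, W)` array. The scores are made-up values, and the helper names `scores_to_mask` and `class_fractions` are hypothetical. Each pixel is assigned to its highest-scoring class, and the share of the image covered by each class is then computed, which could stand for land-cover proportions in one field or tissue composition in the other.

```python
import numpy as np

def scores_to_mask(class_scores):
    """Turn a (num_classes, H, W) score array into an (H, W) mask of class indices."""
    return np.argmax(class_scores, axis=0)

def class_fractions(mask, num_classes):
    """Fraction of pixels assigned to each class index."""
    counts = np.bincount(mask.ravel(), minlength=num_classes)
    return counts / mask.size

# Made-up scores for 3 classes on a 2 x 2 image.
scores = np.array([
    [[0.90, 0.20], [0.10, 0.30]],  # class 0
    [[0.05, 0.70], [0.20, 0.30]],  # class 1
    [[0.05, 0.10], [0.70, 0.40]],  # class 2
])

mask = scores_to_mask(scores)          # [[0, 1], [2, 2]]
fractions = class_fractions(mask, 3)   # [0.25, 0.25, 0.5]
```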

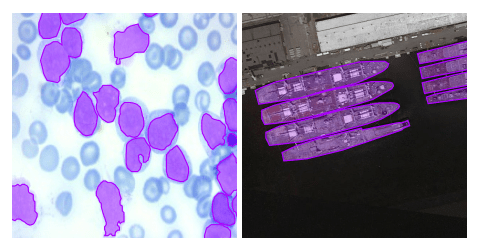

Image segmentation of cancerous cells in micrograph imagery on the left and of warships in remote sensing imagery on the right.

How Deep Learning Enhances Analysis in Remote Sensing and Micrograph Imagery Analysis

Improved Accuracy

Deep learning has enabled remote sensing and micrograph imagery analysis to be performed with a higher degree of accuracy than ever before. Neural networks can identify patterns and features in the imagery that may not have been evident through traditional analysis techniques, leading to more accurate identification and classification of objects and structures. This has enabled us to gain a better understanding of the world around us, whether it's monitoring changes in the environment or identifying cellular structures that may have important implications in medicine.

Efficient Processing

Deep learning has also made processing large sets of data more efficient in both fields. Traditional analysis techniques can be time-consuming and require extensive manual effort. However, with deep learning, neural networks can quickly and accurately process large sets of data, reducing the time and effort required to analyze imagery.

This efficiency has enabled us to process larger sets of data than ever before, allowing for more comprehensive analysis and insights. For example, in remote sensing, this has enabled us to monitor changes in the environment on a global scale, while in micrograph imagery analysis, this has enabled us to analyze large sets of cellular images to gain insights into disease processes.

Enhanced Visualization

Deep learning has also enhanced visualization in both fields, making it easier to interpret and communicate data. Neural networks can be trained to identify specific objects and structures, enabling them to be highlighted and visualized in a way that is easy to understand.

For example, in remote sensing, this has enabled us to create detailed maps and visualizations of land cover and vegetation, making it easier to monitor changes over time. In micrograph imagery analysis, this has enabled us to visualize specific cellular structures, making it easier to identify abnormalities.
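Highlighting a detected structure can be as simple as tinting the pixels a model has flagged. The following sketch assumes a grayscale `uint8` image and a boolean mask of the same height and width, such as one produced by a segmentation model; the function name `overlay_mask`, the tint color, and the `alpha` value are illustrative choices.

```python
import numpy as np

def overlay_mask(gray_image, mask, color=(255, 0, 0), alpha=0.5):
    """Blend `color` into a grayscale uint8 image wherever `mask` is True.

    Returns an (H, W, 3) uint8 RGB image; pixels outside the mask keep
    their original gray value.
    """
    rgb = np.stack([gray_image] * 3, axis=-1).astype(float)
    tint = np.array(color, dtype=float)
    rgb[mask] = (1.0 - alpha) * rgb[mask] + alpha * tint
    return rgb.round().astype(np.uint8)
```

The same overlay works for a flagged cell nucleus in a micrograph or a flagged parcel of vegetation in a satellite scene, since in both cases the input is just an image and a mask.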